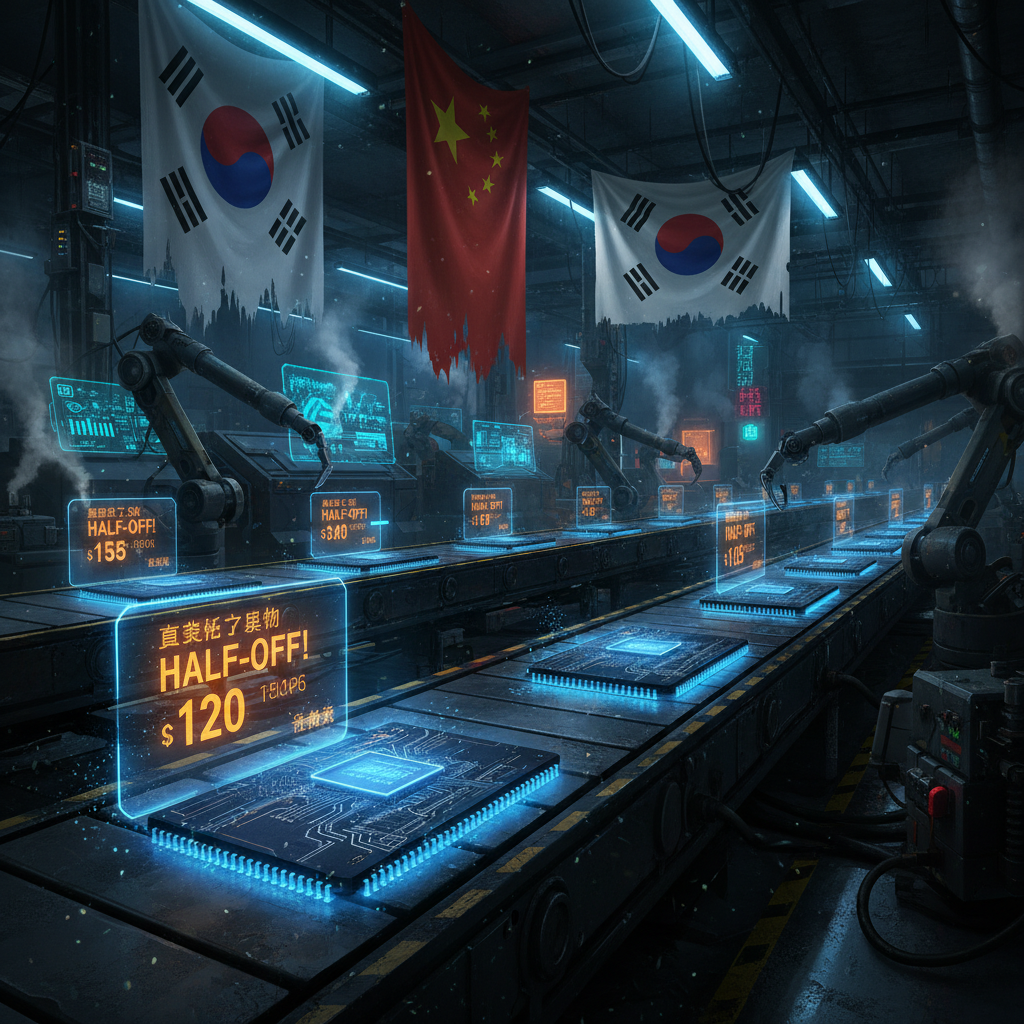

Chinese memory chipmaker ChangXin Memory Technologies (CXMT) is selling DDR4 chips at roughly half the prevailing market rate, directly undercutting Korean giants Samsung and SK Hynix. This isn't just a pricing story: it signals that Chinese memory manufacturing has matured to the point where it can compete on volume and price simultaneously. For Korea's chip industry, this is the nightmare scenario: a state-backed competitor willing to absorb losses to capture market share. The memory chip war just got a new front.

China undercuts the memory market, India joins America's mineral coalition, and a 70B model squeezes onto a single gaming GPU.

🌐 Geopolitics & Chips

India's AI Impact Summit 2026 concluded with two major outcomes: India formally joined Pax Silica — the US-led coalition for resilient critical minerals and AI supply chains — and 88 countries adopted the New Delhi Declaration on AI Impact, pushing an "AI for All" equity framework. Microsoft committed $50B to AI in the Global South; OpenAI and AMD partnered with Tata Group for Indian AI infrastructure. PM Modi met personally with Pichai, Altman, and Ambani. India is positioning itself as the bridge between Western AI and the developing world.

🧠 Foundation Models

OpenAI engineer Thibault Sottiaux announced that GPT-5.3-Codex-Spark is now serving over 1,200 tokens per second — a ~30% speed improvement. For a coding-focused model, throughput matters as much as quality: faster inference means tighter feedback loops, which means agents can iterate more quickly. The speed war in coding models is heating up alongside the accuracy war.
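
To put those numbers in context, here is a back-of-the-envelope sketch. It assumes the ~30% figure means the new rate is 1.3 times the old one (the baseline is not stated above), and the 600-token response size is a hypothetical value chosen only for illustration.

```python
def baseline_rate(new_rate: float, improvement: float) -> float:
    """Rate before a fractional speedup, assuming new = old * (1 + improvement)."""
    return new_rate / (1.0 + improvement)


def seconds_per_response(tokens: int, rate: float) -> float:
    """Time to generate `tokens` tokens at `rate` tokens per second."""
    return tokens / rate


NEW_RATE = 1200.0      # tokens/s, the figure reported above
IMPROVEMENT = 0.30     # ~30%, read here as a multiplier of 1.3 (assumption)
RESPONSE_TOKENS = 600  # hypothetical size of one agent step

old_rate = baseline_rate(NEW_RATE, IMPROVEMENT)
before = seconds_per_response(RESPONSE_TOKENS, old_rate)
after = seconds_per_response(RESPONSE_TOKENS, NEW_RATE)

print(f"implied previous rate: {old_rate:.0f} tokens/s")
print(f"{RESPONSE_TOKENS}-token step: {before:.2f}s before, {after:.2f}s after")
```

Under those assumptions the implied previous rate is about 923 tokens per second, and a 600-token step drops from roughly 0.65 seconds to 0.5 seconds. That is a small saving per step, but it compounds across the dozens of steps an agent may take on one task.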

🔧 Open Source & Hardware

A new open-source project called ntransformer enables Llama 3.1 70B inference on a single RTX 3090 by bypassing the CPU entirely and streaming model weights directly from NVMe storage to GPU. It's a clever architectural hack that makes "too large for consumer hardware" models suddenly accessible. The democratization of large models continues — not just through distillation, but through novel inference engineering.
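
Why stream at all? At 16-bit precision, 70 billion parameters take roughly 140 GB, while an RTX 3090 has 24 GB of VRAM, so the whole model cannot be resident at once. The toy sketch below shows the general idea of layer-by-layer weight streaming. It is not ntransformer's code. It reads from an ordinary file through the CPU, whereas the project is described as going from NVMe straight to the GPU. Every name and size in it (`DIM`, `NUM_LAYERS`, `streamed_forward`, and so on) is hypothetical.

```python
import os
import tempfile
from array import array

DIM = 4          # hypothetical hidden size (tiny, for illustration)
NUM_LAYERS = 3   # hypothetical layer count
LAYER_BYTES = DIM * DIM * array("d").itemsize


def make_layers():
    """Build deterministic toy weight matrices, flattened row-major."""
    return [
        [((layer + 1) * (i + 1)) % 5 / 10.0 for i in range(DIM * DIM)]
        for layer in range(NUM_LAYERS)
    ]


def save_layers(path, layers):
    """Write every layer back to back, standing in for a weights file on NVMe."""
    with open(path, "wb") as f:
        for weights in layers:
            array("d", weights).tofile(f)


def matvec(weights, x):
    """Compute y = W @ x for a row-major DIM x DIM matrix."""
    return [
        sum(weights[row * DIM + col] * x[col] for col in range(DIM))
        for row in range(DIM)
    ]


def in_memory_forward(layers, x):
    """Reference path: all layers resident at once."""
    for weights in layers:
        x = matvec(weights, x)
    return x


def streamed_forward(path, x):
    """Run all layers while holding only one layer's weights at a time."""
    buffer = bytearray(LAYER_BYTES)          # reused staging buffer (stand-in for VRAM)
    weights = memoryview(buffer).cast("d")   # zero-copy view of the buffer as doubles
    with open(path, "rb") as f:
        for _ in range(NUM_LAYERS):
            if f.readinto(buffer) != LAYER_BYTES:
                raise ValueError("weights file is shorter than expected")
            x = matvec(weights, x)           # the next read overwrites this layer
    return x


def run_demo():
    """Compare the streamed result against the all-in-memory reference."""
    layers = make_layers()
    x0 = [1.0, 2.0, 3.0, 4.0]
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "weights.bin")
        save_layers(path, layers)
        return streamed_forward(path, x0), in_memory_forward(layers, x0)


streamed, reference = run_demo()
print("streamed matches in-memory:", streamed == reference)
```

The trade-off is the usual one: peak memory falls to a single layer's worth of weights, while every forward pass pays the cost of reading the full model from storage again. That is why the speed of the storage-to-GPU path matters so much in a design like this.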

An open-source project implements a personal AI assistant, following the "Claw" pattern that Karpathy named this morning, in under 888KB on ESP32 microcontroller hardware. From data centers to microcontrollers in the same news cycle. The Claw ecosystem isn't just for desktops and servers anymore; it's reaching into embedded and IoT devices. The pattern is becoming universal.

🔭 Secretary's Assessment

The CXMT story is the one to watch. When a Chinese chipmaker can sell DDR4 at half price, it's not because they're losing money for fun — it's because their manufacturing costs have dropped to the point where this is sustainable, or because the state is subsidizing market capture. Either way, the Korean duopoly that has dominated memory for decades is facing its first serious challenge from below. This is the chip war's second front: not just cutting-edge logic chips where export controls apply, but commodity memory where China can compete without hitting sanctions walls.

India joining Pax Silica is the geopolitical complement. The US is building a coalition for critical mineral and AI supply chain resilience, and India just signed on. Connect the dots: China undercuts memory prices, the US locks down mineral supply chains with India as anchor partner, and 88 countries sign a declaration about equitable AI access. The great AI alignment isn't just technical — it's geopolitical, and the blocs are crystallizing fast.

The two open-source stories share a through-line that matters: AI capability is diffusing downward. A 70B parameter model on a gaming GPU. A personal AI assistant on a $4 microcontroller. This morning Karpathy named the Claw category; by evening, someone's running one on an ESP32. The speed at which the ecosystem fills in every hardware niche is remarkable. The implication is clear: you cannot contain AI capability through hardware restrictions alone. The genie doesn't just escape the bottle — it miniaturizes.

Bottom line: A Saturday evening cycle with more geopolitical substance than expected. The chip war has a new price front, the alliance system has a new member, and open-source keeps making "impossible" hardware configurations possible. The week ends with the world a little more multipolar and AI a little more ubiquitous.